How Does Generative AI Change Video Production in 2025?

How will AI change video content creation? In this article, I share my predictions and best practices about how marketing, media, and communications teams can use AI to improve content velocity and reduce costs.

Companies everywhere are wondering how generative AI will change their creative workflows. Runway, Adobe Firefly, Veo, and Sora dominate headlines with splashy demo reels. While these products are imaginative and stunning when they work, they're just the beginning of how generative technologies and LLMs will impact video production.

At Kapwing, we've seen firsthand how marketing, media, and creative teams are putting these technologies to use. In this article, I'll share my vision of how genAI impacts the future of video production for other marketing, creative agency, media, and communication leaders.

This article is based on a talk I recently gave to a group of journalism and media professionals studying AI on the WAN-IFRA World Tour.

Video edited on Kapwing

2025 Predictions for Video and AI

Content teams and individual influencers are on the creative treadmill with pressure to create more and better content. News teams lean more into breaking stories to capture attention on social media, but have to accelerate video content creation without sacrificing editorial quality to win. How will AI, LLMs, and generative tech empower global teams to create more content, faster?

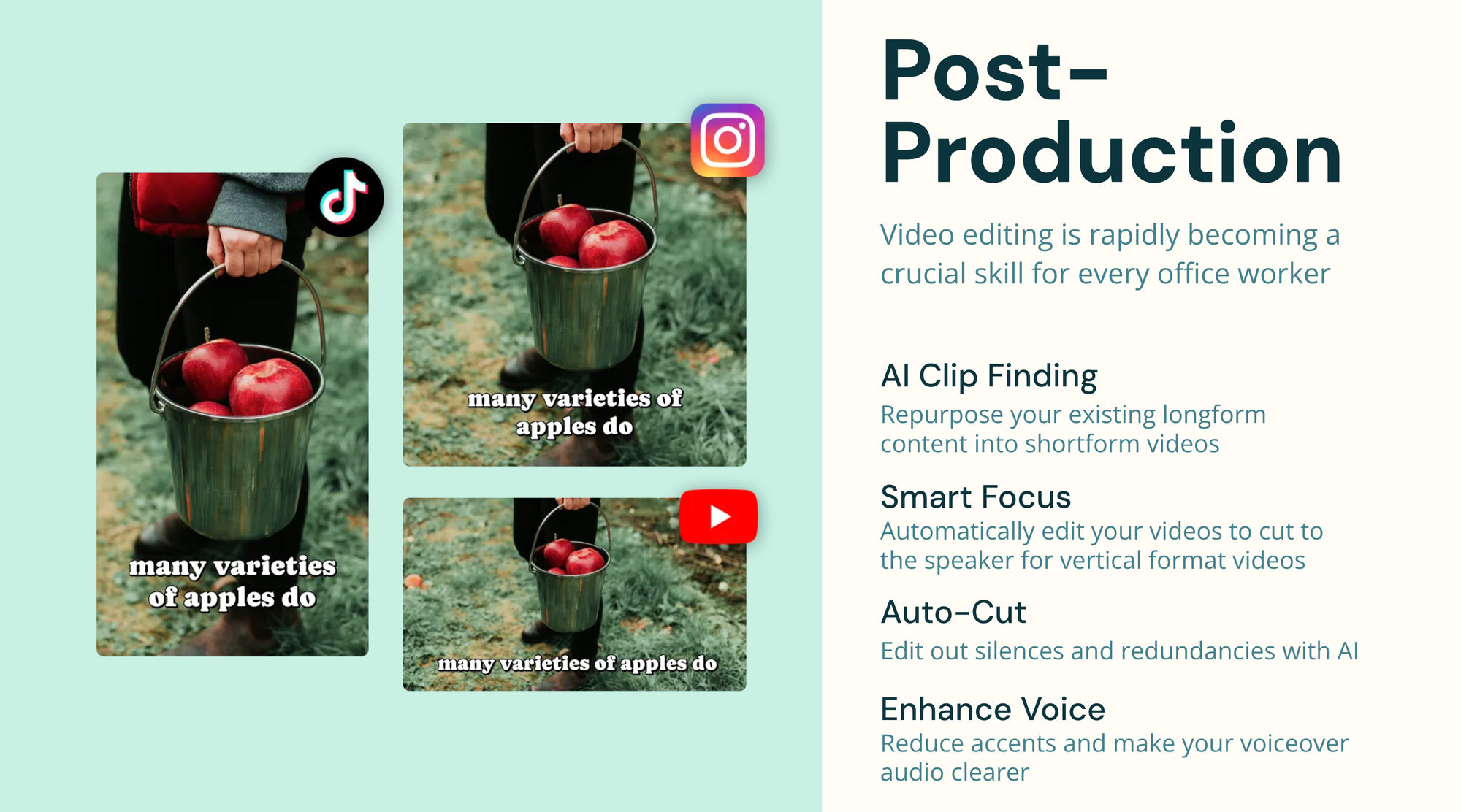

Faster Post Production

Step 1 is to identify the tedious, time-consuming, and expensive tasks that your team must perform when publishing videos. Social media teams often complain about these repetitive tasks:

- Converting a landscape video to vertical, focusing on the main speaker

- Transcribing a video for captioning

- Getting feedback from teammates in a different region or waiting for video production teams to make small tweaks

- Removing silences, stutters, and filler words for snappier dialogue

- Adding consistent brand elements, like outros, logos, etc.

- Audio leveling and enhancing speaker quality
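To make one of these tasks concrete, here is a minimal sketch of how silence and filler-word removal could work once a transcription model has produced word-level timestamps. The `Word` structure, the filler list, and the 0.75-second pause threshold are all assumptions made for illustration, not a description of any particular product's implementation.

```python
from dataclasses import dataclass

# Hypothetical filler list and pause threshold; tune both for real footage.
FILLERS = {"um", "uh", "er", "hmm"}
MAX_GAP_SECONDS = 0.75


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float    # seconds


def keep_ranges(words, fillers=FILLERS, max_gap=MAX_GAP_SECONDS):
    """Return (start, end) ranges of the source video worth keeping."""
    ranges = []
    cut_pending = False  # True right after a filler word was dropped
    for word in words:
        if word.text.lower().strip(".,!?") in fillers:
            cut_pending = True
            continue
        short_pause = bool(ranges) and word.start - ranges[-1][1] <= max_gap
        if short_pause and not cut_pending:
            # Natural pause: extend the current range through this word.
            ranges[-1] = (ranges[-1][0], word.end)
        else:
            # Long silence, dropped filler, or first word: start a new range.
            ranges.append((word.start, word.end))
        cut_pending = False
    return ranges


words = [
    Word("So", 0.0, 0.2),
    Word("um", 0.3, 0.6),
    Word("welcome", 0.7, 1.1),
    Word("back", 3.0, 3.3),
    Word("everyone", 3.4, 3.9),
]
print(keep_ranges(words))  # [(0.0, 0.2), (0.7, 1.1), (3.0, 3.9)]
```

The output is a cut list that an editor or rendering step could apply. A production tool would likely also pad each range slightly so cuts do not clip the start or end of a word.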

In the last two years, open-source technology and top vendors have improved transcription capabilities across languages. Because generative models can detect the context, audio, and visual subject of a video, they can do more to transform projects intelligently.

Kapwing's Studio has 20 AI-powered workflows, leveraging the best-in-class models for each one. We work with multinational companies every week to add new automations and integrations for their workflows.

Takeaways: Generative AI can automate more tasks that require context about the transcript, subject, or layout of the video. For example, Longhouse Media increased their video production by 43% using Kapwing's AI suite.

Repurposing and Dubbing

Today, social media and marketing teams often have a huge existing catalog of content: webinars, training videos, presentations, lectures, interviews, recordings, etc. AI unlocks content repurposing so that more shareable videos can be captured from an existing media library.

AI Clipping is a huge timesaver for finding highlights in existing footage. Kapwing's Repurpose Studio leverages large language models (LLMs) to identify insights and stories within longer videos, center the frame on the speaker, and automatically clip videos for social.
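As a rough illustration of the clipping step, the sketch below assumes an LLM has already assigned each transcript segment a score for how shareable it is. The `Segment` type, the 0-to-1 score, and the 60-second cap are hypothetical. The selection itself is a simple greedy pass, not a description of how Repurpose Studio works internally.

```python
from typing import NamedTuple


class Segment(NamedTuple):
    start: float  # seconds
    end: float    # seconds
    score: float  # hypothetical 0-1 "shareability" score from an LLM


def pick_highlights(segments, max_clips=3, max_length=60.0):
    """Greedily choose the best-scoring segments that do not overlap."""
    chosen = []
    for seg in sorted(segments, key=lambda s: s.score, reverse=True):
        if seg.end - seg.start > max_length:
            continue  # too long for a social clip
        overlaps = any(seg.start < c.end and c.start < seg.end for c in chosen)
        if not overlaps:
            chosen.append(seg)
        if len(chosen) == max_clips:
            break
    return sorted(chosen, key=lambda s: s.start)  # timeline order


segments = [
    Segment(0, 45, 0.4),
    Segment(30, 80, 0.9),
    Segment(120, 150, 0.7),
    Segment(200, 320, 0.95),  # highest score, but over the length cap
]
for clip in pick_highlights(segments, max_clips=2):
    print(clip.start, clip.end, clip.score)
```

Here the two-minute segment is skipped despite its score, and the two remaining best segments are returned in the order they appear in the source video.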

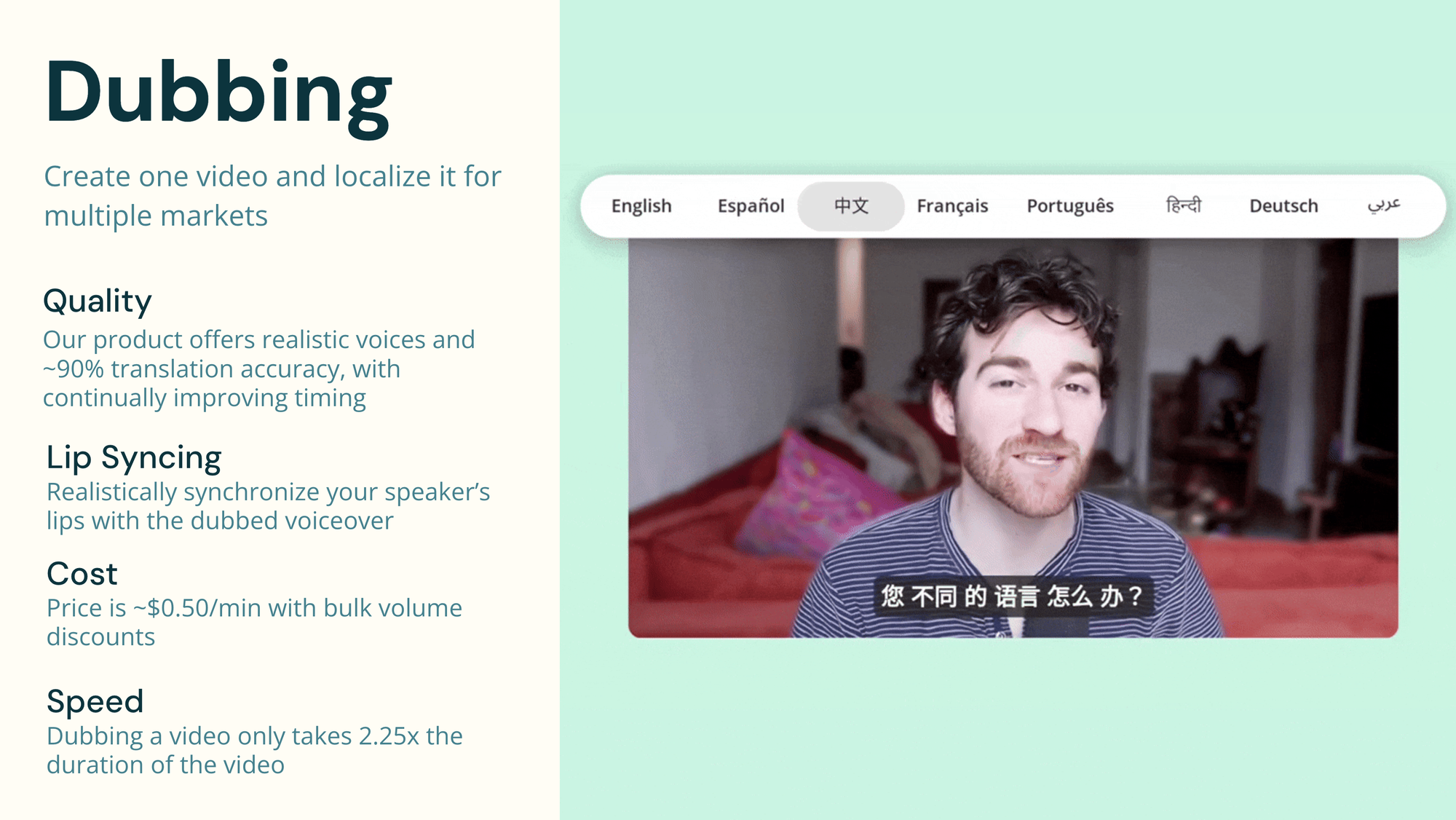

Generative AI has also made AI dubbing more realistic, much faster, and much cheaper. Videos can be created from existing content or scripts in a different language rather than making new content for each market. Instead of hiring a voice actor to translate a video into a new language, this dubbing can be done automatically using AI. For example, we recently worked with a major fitness company to translate their catalog of videos into 3 new languages.

Takeaways: Generative AI changes the way that teams leverage existing footage and content to produce more share-worthy social media video. For example, Brandon Arvanaghi of Meow grew his LinkedIn audience to 85,000 followers using repurposed material. To reduce the cost of content creation, get more out of your existing library through AI Dubbing and Clipping.

Script to Video

The next generation of media companies will come from teams who use AI Video Generators to transform written content into video automatically. AI unlocks Script to Video through realistic text-to-speech generation and visual matching of the script to relevant B-Roll clips.

Soon, media companies will be able to perform an AI-powered visual search across their media library to pull in clips relevant to a generated script. For example, a customer in the healthcare sector can instantly match medical terms from their script with relevant B-roll footage from their media library, speeding up the production process. Or, a journalist could convert an article to a video in one click. This script-to-video starting point can increase the amount of content creators publish.

In video editing software, users will be able to search stock media libraries or generate scenes inline to create new footage when needed. With generative tools embedded into search workflows, editors can add imagined scenes as B-Roll or an overlay to visualize abstract or fantastical ideas.

Takeaway: AI-generated video clips augment your video catalog rather than replace it. Leverage AI to create scenes when needed, overlaid on or placed side by side with your original footage.
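The matching idea can be sketched in a few lines. This toy version pairs each script sentence with the library clip whose tags share the most words with it. The clip names and tags are invented for illustration, and a real visual search would more likely compare learned embeddings of the footage than hand-written tags.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def match_broll(script_sentences, library):
    """Map each sentence to the library clip sharing the most tag words.

    `library` is a dict of {clip_name: set_of_tags}. Sentences with no
    overlapping tags map to None so an editor can fill them in manually.
    """
    matches = {}
    for sentence in script_sentences:
        words = tokenize(sentence)
        best_clip, best_overlap = None, 0
        for clip, tags in library.items():
            overlap = len(words & tags)
            if overlap > best_overlap:
                best_clip, best_overlap = clip, overlap
        matches[sentence] = best_clip
    return matches


# Hypothetical media library and script.
library = {
    "mri_scanner.mp4": {"mri", "scan", "radiology"},
    "nurse_checkup.mp4": {"nurse", "patient", "checkup"},
}
script = [
    "The patient arrives for a routine checkup.",
    "An MRI scan confirms the diagnosis.",
    "Recovery takes about two weeks.",
]
for sentence, clip in match_broll(script, library).items():
    print(f"{clip}: {sentence}")
```

Sentences with no match come back as `None`, which is where stock footage or a generated scene could fill the gap.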

Content Coaching

ChatGPT and similar LLMs can be an amazing coach for creators striving to hit a content creation goal. Use AI to brainstorm, get feedback on, and iterate on visual scripts, layouts, and strategies.


Within Kapwing, creators can collaborate with an LLM to draft a script, using text prompts to refine it. Once they have a final script, creators can record audio or a screencast inline or use an AI Persona to bring that script to life.

How to set a creative team up for success with generative AI

When the technology is changing so quickly, how do executives keep up with generative AI and develop a strategy for implementation? Here are the best practices I've learned from my interviews with leading media executives in 2025.

Learn more about the market and technology

There are hundreds of events and speaker series focused on applications of generative AI. This website, GenerativeAIinSF.com, for example, shows events based just in the Bay Area. Attend a conference or watch speakers on YouTube to learn more about the power of AI and how it's used to increase content quality and reduce turnaround time. Schedule discovery calls with companies who have tech you want to learn more about.

Example: The CTO of Forbes recently contacted Tess to learn more about image generation licensing. He's interested in an Enterprise model that is both high-quality and commercially safe.

Research leading models

Rather than relying on demo reels, which often overstate the quality of AI models, ask vendors to provide a free trial and assess the quality yourself. Be wary of demos that introduce "movie magic" to process your input data.

Example: Recently, we worked with ABS-CBN to improve Kapwing's Tagalog transcription model using fine-tuned Whisper data. The Filipino media conglomerate uses this custom Whisper model to transcribe their videos in-house.

Host an AI "Hackathon"

Whether or not you employ engineers at your company, a "hackathon" (a one- to two-day event focused on experimentation and prototypes) can be a fun way to encourage your team to embrace and explore new AI applications.

Example: Kapwing hosted a hackathon in the fall of 2023. The prototypes became the basis of our AI Video Generator, which now serves about 15,000 creators each week.

Embed models into one platform instead of buying individual AI tools

Generative AI can get expensive if you have one-off subscriptions for each service. In addition, the models are changing and improving quickly, so companies that make an annual commitment for one service may lose money or miss out on the best model.

Instead, find ways to embed generative AI plugins, tools, and automations into the tools you already use. Partner with startups or applications that evaluate multiple models rather than building their own. This approach is cheaper, since multiple AI vendors are bundled into one subscription. It is also more flexible and higher quality, since new vendors are added as they emerge and models can be chained in a pipeline.

Example: Kapwing brings together best-in-class vendors. For example, we have Pika embedded for video generation and DeepL for machine translation, and we sometimes do custom work to embed a new model for an enterprise customer.
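To show why this bundling stays flexible, here is a minimal sketch of a pipeline whose stages sit behind a common interface, so one vendor can be swapped without touching the rest. The stage functions are placeholders that only format strings. They do not call any real vendor API, and all names here are hypothetical.

```python
from typing import Callable, Dict, List

Stage = Callable[[str], str]


def build_pipeline(stages: List[Stage]) -> Stage:
    """Chain stages so each one receives the previous stage's output."""
    def run(payload: str) -> str:
        for stage in stages:
            payload = stage(payload)
        return payload
    return run


# Placeholder stages; in practice each would wrap a vendor's API.
def transcribe_stub(audio_path: str) -> str:
    return f"transcript({audio_path})"


def translate_stub(text: str) -> str:
    return f"spanish({text})"


def speak_stub(text: str) -> str:
    return f"audio({text})"


# Swap an entry here and rebuild the pipeline to trial a different vendor.
registry: Dict[str, Stage] = {
    "transcribe": transcribe_stub,
    "translate": translate_stub,
    "speak": speak_stub,
}

order = ("transcribe", "translate", "speak")
dub = build_pipeline([registry[name] for name in order])
print(dub("webinar.mp4"))  # audio(spanish(transcript(webinar.mp4)))
```

Because every stage takes and returns the same type, replacing one registry entry is the only change needed to evaluate a new model against the existing one.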

About Kapwing

Founded in 2018, Kapwing began with a focus on video production. Today, we’re a collaborative editing platform that empowers creative teams to work together seamlessly from anywhere, all within the browser.

Our customers are media companies, marketing teams, individual creators, SMBs, and communications professionals. Kapwing serves people with a story to tell, using video as a medium.

Rather than building AI models ourselves, we use the best-in-class models for each step in the workflow. For example, our dubbing pipeline uses:

- ElevenLabs for realistic text to speech voices

- Assembly, OpenAI's Whisper, Deepgram and Rev for transcription

- DeepL and Google Translate for video translation

- Texels, SyncLabs, and Sieve for lip sync

...and we're constantly evaluating new vendors against the existing ones. Our focus is on how we can make these technologies useful for teams. Reach out to us for a demo or to learn more!